When a Product Grows Faster Than Documentation: How We Use AI to Maintain a Single Source of Truth

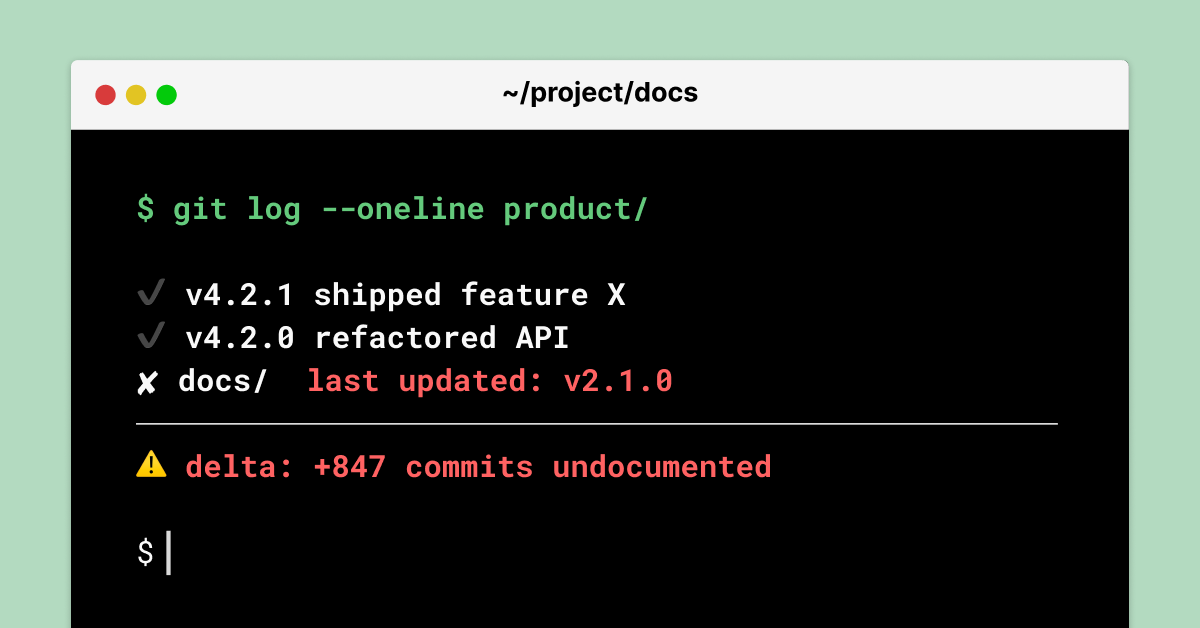

At a certain stage of product development, the same pattern starts to appear. Not because the team is making mistakes, but because the product is naturally growing. New features are added, processes change, teams expand. Development moves forward faster than documentation can be updated, design reacts to new needs, and documentation gradually turns into a historical record.

The result is an environment where no one is completely sure:

- whether the documentation reflects the current reality,

- whether the design in Figma matches production,

- and whether everyone on the team is looking at the same “source of truth”.

This was the moment we realized that the problem wasn’t the amount of documentation, but the lack of a system that could keep it consistent over time.

Documentation Is Not Text. It’s a Product System.

Product documentation is not a single document or a single tool. In reality, it’s an entire ecosystem spread across multiple systems.

It includes technical and functional specifications, UX and interaction descriptions, business rules, edge cases, and even the historical context behind decisions. If these layers don’t evolve together, chaos emerges - often surfacing later during onboarding, refactoring, or larger product changes.

Manually maintaining consistency between code, design, and documentation is unsustainable in the long run for a complex product. That’s why we decided to involve AI - not as a text generator, but as a systematic validation and comparison layer.

Why an AI Agent and Not “Just an AI Tool”

Traditional AI tools work reactively - they respond to prompts. That wasn’t enough for us.

We needed a solution that:

- works with real data from multiple systems,

- understands the difference between intent and reality,

- and can flag inconsistencies before they become production issues.

That’s why we designed a specialized AI agent whose role is not to create content, but to maintain product consistency.

Technology Foundation: Claude Code and MCP

The agent is built on Claude Code, which allows it to work with large contexts and compare sources analytically without losing track of how they relate to each other.

A key part of the architecture is MCP (Model Context Protocol) - a layer that lets the AI work with real systems in a safe, controlled way.

Through MCP, the agent has access to:

- design assets in Figma,

- product documentation in Confluence,

- source code in GitHub.

As a result, the AI doesn’t work with snippets or assumptions, but with the same system state the entire team works with.

A Unified Specification Template as the Basis for Scaling

To give the agent a clear framework, we created a unified product template for all specifications.

Every specification now follows the same structure: it starts with a feature overview, continues with a description of real behavior, explains the design and UX intent from Figma, defines states and edge cases, describes business rules, and ends with open questions.

This structure is important not only for people, but also for AI.

The agent uses it as a reference and a control checklist. If a section is missing or unclear, the agent flags it and actively asks what needs to be added.

Documentation thus becomes a living process, not a passive record.
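To make the checklist role concrete, here is a minimal sketch of a template check. It assumes specifications are available as text with markdown-style headings; the section titles are our reading of the template order above, and `check_spec` is a hypothetical helper, not part of the agent itself.

```python
import re

# Section titles follow the template order described in the text;
# the exact heading wording is an assumption.
REQUIRED_SECTIONS = [
    "Feature overview",
    "Real behavior",
    "Design and UX intent",
    "States and edge cases",
    "Business rules",
    "Open questions",
]


def check_spec(text: str) -> dict[str, list[str]]:
    """Return required sections that are missing or have no body text."""
    # Assumes each section starts with a heading line such as "## Feature overview".
    found: dict[str, str] = {}
    current = None
    for line in text.splitlines():
        heading = re.match(r"^#+\s*(.+?)\s*$", line)
        if heading:
            current = heading.group(1).lower()
            found[current] = ""
        elif current is not None:
            found[current] += line.strip()
    missing = [s for s in REQUIRED_SECTIONS if s.lower() not in found]
    empty = [s for s in REQUIRED_SECTIONS
             if s.lower() in found and not found[s.lower()]]
    return {"missing": missing, "empty": empty}


# Hypothetical, deliberately incomplete specification.
spec = """## Feature overview
Bulk export of invoices.
## Real behavior
## Business rules
Only admins can export.
"""
report = check_spec(spec)
print(report)
```

In this example the report lists three missing sections and one empty section, which is the kind of gap the agent would turn into a question for the team.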

Figma as a Bridge Between Intent and Reality

In this system, Figma has a clearly defined role.

It is not the source of truth - it is the source of intent.

The AI agent compares:

- what is designed in Figma,

- what is implemented in code,

- and what is described in documentation.

Differences are not automatically labeled as errors. Instead, they are treated as signals that a decision needs to be made - and this is exactly where AI delivers the most value.
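The three-way comparison can be sketched as follows. This is an illustration under assumptions: the component states are made-up sample data, and `Signal` and `compare_states` are hypothetical names. The point is that the output describes where a state exists and where it does not, without declaring either side wrong.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    item: str
    present_in: tuple[str, ...]
    absent_from: tuple[str, ...]


def compare_states(sources: dict[str, set[str]]) -> list[Signal]:
    """List every state that is not present in all sources."""
    all_items = set().union(*sources.values())
    signals = []
    for item in sorted(all_items):
        present = tuple(name for name, items in sources.items() if item in items)
        absent = tuple(name for name in sources if name not in present)
        if absent:  # only differences become signals
            signals.append(Signal(item, present, absent))
    return signals


# Hypothetical states of one component, as seen by each system.
sources = {
    "figma": {"default", "loading", "error", "empty"},
    "code": {"default", "loading", "error"},
    "docs": {"default", "error"},
}
signals = compare_states(sources)
for s in signals:
    print(f"{s.item}: in {', '.join(s.present_in)}; not in {', '.join(s.absent_from)}")
```

Whether a designed but unimplemented state is a backlog item, a dropped idea, or a bug is left to the team.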

AI as a “Content Governance Layer”

We were inspired by an approach where AI functions as a governance layer over content.

In practice, this means the agent:

- checks terminology consistency,

- enforces documentation structure,

- identifies missing information,

- and performs a preliminary review before human review.

AI does not decide. AI highlights, summarizes, and asks questions.
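As an illustration of the terminology check, the sketch below flags discouraged variants of a term and reports the preferred one. The glossary entries and the function name `find_term_issues` are hypothetical; a real glossary would come from the team.

```python
import re

# Hypothetical glossary: preferred term -> variants that should be flagged.
GLOSSARY = {
    "workspace": ["work space", "work-space"],
    "sign in": ["log in", "login"],
}


def find_term_issues(text: str) -> list[tuple[int, str, str]]:
    """Return (line_number, found_variant, preferred_term) for each hit."""
    issues = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for preferred, variants in GLOSSARY.items():
            for variant in variants:
                # Whole-word, case-insensitive match of the discouraged variant.
                if re.search(rf"\b{re.escape(variant)}\b", line, re.IGNORECASE):
                    issues.append((lineno, variant, preferred))
    return issues


doc = """Users log in to their workspace.
Each work space has one owner."""
issues = find_term_issues(doc)
for lineno, variant, preferred in issues:
    print(f"line {lineno}: found '{variant}', glossary prefers '{preferred}'")
```

The check only highlights; it does not rewrite the text, which matches the principle that the agent asks rather than decides.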

Solution Architecture (High-Level)

The entire solution is built as a layered system:

- Source systems: GitHub (code), Figma (design), Confluence (documentation)

- MCP layer: secure connectors to individual systems

- AI agent layer: Claude Code + rules + templates

- Outputs: inconsistency reports, specification drafts, questions for the team

The agent never accesses systems directly - everything goes through MCP, ensuring access control and auditability.
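The idea of routing every request through one controlled layer can be sketched like this. The `Gateway` class is a hypothetical stand-in for the connector layer, not the MCP SDK or its API, and the connectors return canned strings instead of calling real services.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class Gateway:
    """Hypothetical stand-in for the connector layer; not the MCP SDK."""
    connectors: dict[str, Callable[[str], str]]
    allowed: set[tuple[str, str]]  # permitted (system, operation) pairs
    audit_log: list[dict] = field(default_factory=list)

    def read(self, system: str, resource: str) -> str:
        permitted = (system, "read") in self.allowed
        # Every attempt is recorded, including denied ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "system": system,
            "resource": resource,
            "permitted": permitted,
        })
        if not permitted:
            raise PermissionError(f"read on {system} is not allowed")
        return self.connectors[system](resource)


# Fake connectors that return canned data instead of calling real services.
gateway = Gateway(
    connectors={
        "github": lambda r: f"code for {r}",
        "figma": lambda r: f"design for {r}",
    },
    allowed={("github", "read")},
)
code = gateway.read("github", "checkout-flow")
try:
    gateway.read("figma", "checkout-flow")
except PermissionError as exc:
    denied = str(exc)
```

Because the agent only ever calls the gateway, the allow-list and the audit log live in one place rather than in each integration.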

What a Single Agent “Run” Looks Like in Practice

The process starts by selecting a scope - for example, a specific feature or flow.

The agent loads the rules and specification template, then requests data from GitHub, Figma, and Confluence via MCP. It normalizes the data, attempts to match entities (components, states, names), and then performs a comparison.

The result is not a finished solution, but a clear overview of differences, missing parts, and questions that need to be resolved by the team.
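The normalization and matching step can be sketched as below. It assumes that the same component is named with different conventions in each system; the names, `normalize`, and `match_entities` are hypothetical, and the matching here is deliberately naive.

```python
import re


def normalize(name: str) -> str:
    """Reduce 'PrimaryButton', 'primary-button', 'Primary Button' to one key."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def match_entities(sources: dict[str, list[str]]) -> dict[str, dict[str, str]]:
    """Group the names from each system under their normalized key."""
    matched: dict[str, dict[str, str]] = {}
    for system, names in sources.items():
        for name in names:
            matched.setdefault(normalize(name), {})[system] = name
    return matched


# Hypothetical component names as they appear in each system.
sources = {
    "figma": ["Primary Button", "Date Picker"],
    "github": ["PrimaryButton", "DatePicker", "Tooltip"],
    "confluence": ["primary-button"],
}
matched = match_entities(sources)

# Entities that do not appear in every system are candidates for questions.
unmatched = {key: found for key, found in matched.items() if len(found) < len(sources)}
for key, found in unmatched.items():
    missing = [s for s in sources if s not in found]
    print(f"{key}: missing in {', '.join(missing)}")
```

Exact matching on a normalized key will miss renamed entities (for example "Date Picker" versus "Calendar Input"), so a real run would need a fuzzier matching step or a manually maintained mapping.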

This agent is not the author of documentation.

It is a systematic partner that helps the team maintain order within complexity.

Business Value for the Company

From a business perspective, this approach brings higher trust in documentation, faster onboarding for new team members, reduced risk during changes, and better readiness for product scaling.

AI thus becomes not an experiment, but a stable part of product processes.

Conclusion

AI didn’t build the product in place of people, but it helped us keep the product understandable, even as it grows.

And that’s where we see its greatest value: as a tool that helps teams make better decisions on solid foundations.